You’re Preparing for the Wrong Kind of AI Interview

AI interviews score differently, ghost more, and disclose less. A practical guide to navigating the process without losing your leverage.

I’ve been recruiting for long enough that I remember when “pre-screening” meant a phone call with an HR coordinator or recruiter who was clearly reading from a script and trying to get off the call faster than you were. The questions were standardized, the tone was perfunctory, and everyone involved understood it for what it was: a box to check before the real thing. Nobody pretended it was a meaningful conversation.

The pre-recorded video interview is that same box, rebuilt with better technology and worse transparency. And a lot of candidates are treating it like something it isn’t.

The advice circulating online right now (dress professionally, look at the camera, smile, practice your STAR answers) applies to human interviews. Some of it applies here too.

But the part that most advice skips is structural: a pre-recorded AI-scored video interview or AI avatar interview is a different evaluation format with a different audience, different scoring logic, and different failure modes than a conversation with a person. If you prepare for them the same way, you’re solving for the wrong variable.

According to Greenhouse’s 2026 Candidate AI Interview Report, which surveyed 2,950 active job seekers across five countries, 63% of US job seekers have now been interviewed by AI. That’s a 12-percentage-point increase from six months prior. This thing is not coming. It’s here.

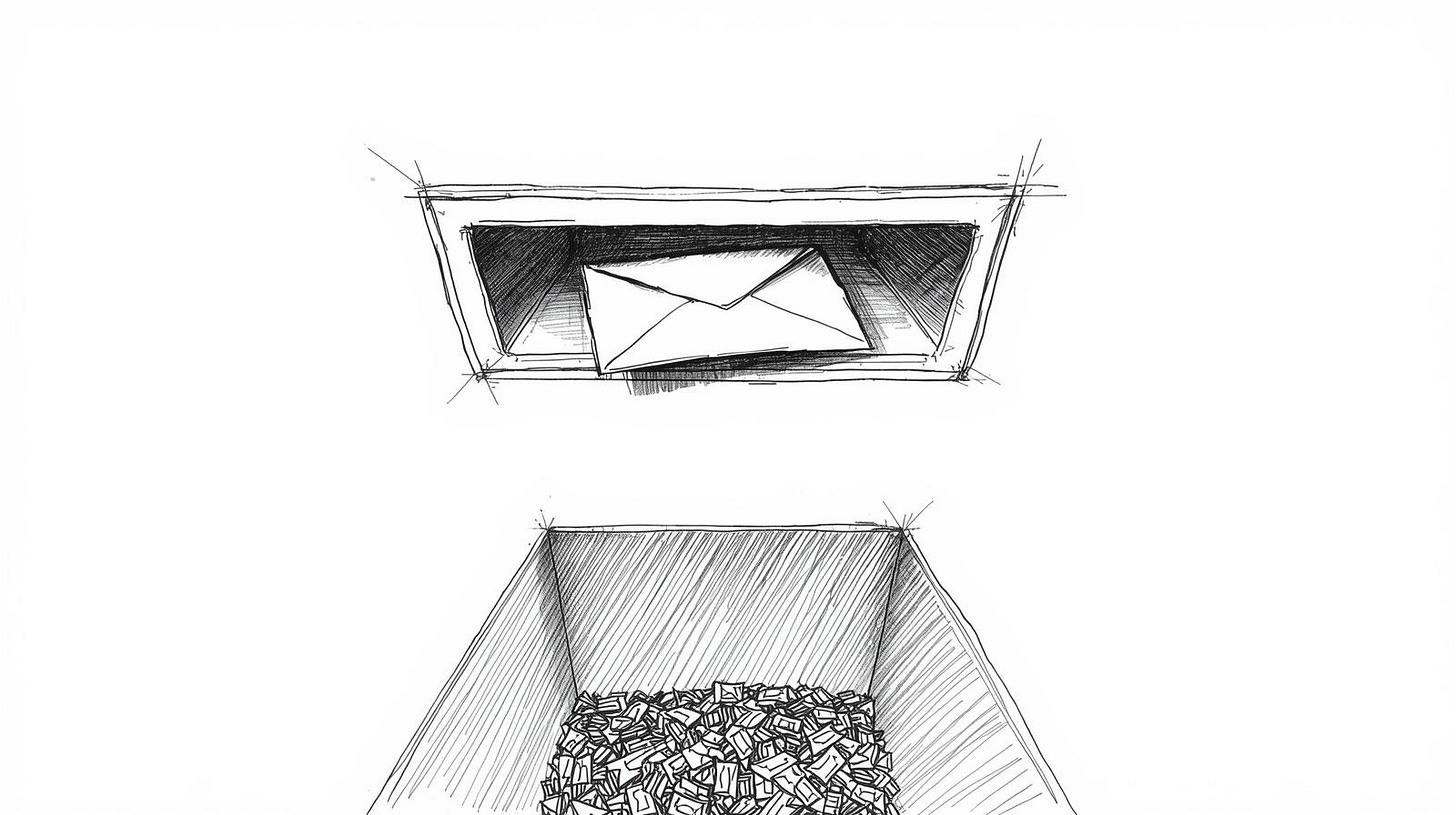

What’s also here, though less discussed: the outcomes are bad. In that same survey, 38% of US candidates who completed an AI interview never heard back at all. More were ghosted than were formally rejected (13%) or moved forward (28%). You can do everything right (show up, record, submit) and silence is still the single most likely outcome, ahead of either a rejection or an advance.

So before we talk about how to prepare, it’s worth being clear about what you’re preparing for.

The format you’re walking into

A pre-recorded AI video interview is not a conversation. Nobody is watching in real time. You get a link, a prompt (usually delivered as text or audio), and a countdown timer. You record your answer. You can’t ask for clarification, can’t read the room, can’t adjust based on a frown or a follow-up question. The feedback loop that human conversation relies on is entirely absent.

What the system is scoring varies by vendor. Some tools analyze the words you say: vocabulary, structure, relevance to the prompt. Others analyze delivery: speech pace, pauses, filler words. Some vendors have moved away from facial expression scoring after a wave of legal complaints, but not all of them have, and you won’t necessarily know which kind of system you’re in.

In March 2025, the ACLU of Colorado filed a bias complaint against HireVue on behalf of a deaf and Indigenous woman who alleged the platform’s scoring worked worse for candidates outside certain demographic profiles. It is one data point in a longer pattern of litigation that has forced some vendors to be more transparent about what they’re actually measuring, and left others quiet.

The practical implication for preparation: you’re not trying to convince a person. You’re trying to be legible to a system. That means a few things most people don’t think about.

Structured answers matter more, not less. STAR format (Situation, Task, Action, Result) was designed for human evaluators who need to follow a narrative. AI scoring systems that analyze content do the same thing: they’re looking for a logical progression from problem to action to outcome. A wandering, conversational answer that a skilled human interviewer might find charming will likely score worse than a clean, organized one. Rein in your instinct to be warm, and be clear instead.

Pacing also matters differently. Human interviewers absorb pauses as thinking. AI scoring systems that flag speech patterns may treat unusual silence or excessive filler words as signals. Don’t rush (that creates a different problem), but be intentional. Record yourself once before you submit anything, not to polish your performance but to check whether you sound like you know where you’re going.

The live AI interview, where a conversational agent asks questions in real time, is a different format again. Here the advice tilts back toward the human interview end: you’re interacting, you can ask the system to repeat or clarify, the pacing is more natural. But the evaluation is still algorithmic. Treat it as you would a structured phone screen where someone is taking notes: professional, direct, not overly informal.

What they owe you before the camera turns on

In the Greenhouse survey, 70% of US candidates who experienced AI evaluation weren’t clearly told AI would be evaluating them before their most recent AI interview. That number should alarm you. You recorded yourself, submitted footage, had that footage analyzed by a scoring algorithm, and in most cases you had no idea any of that was happening.

There is legal movement on this. New York City’s Local Law 144, effective July 2023, requires employers hiring for roles based in or attached to NYC offices to disclose when an automated employment decision tool is being used in evaluation, conduct annual independent bias audits on those tools, and make audit results publicly available. Illinois has had a related law on AI video interviews since 2020. California’s civil rights regulations, effective October 2025, require meaningful human oversight for any automated system used in hiring decisions. Colorado has its own AI Act. The patchwork is messy, enforcement is inconsistent, and if you’re not in a covered jurisdiction, you may have no legal protection at all.

What this means practically: before you record anything, you can ask. You are not being aggressive or difficult. You can email the recruiter or contact listed in the invitation and ask: what system is being used to evaluate this interview, what is it measuring, and is a human also reviewing submissions? Most recruiters will answer this, though the answer is sometimes “I don’t know,” which is itself information.

The top ask from candidates in the Greenhouse survey to improve comfort with AI in hiring was having the option to request a human interview instead (46% of US respondents), followed by being told upfront that AI is involved (44%). That’s what candidates want. Whether companies deliver it is a separate question. But you can ask.

If the company is based in or hiring for a role in New York City, the disclosure obligation isn’t optional: they’re supposed to tell you, in the job listing or at least ten business days before the AI tool is used, that an automated decision tool is part of the process.

Bias, and the question I can’t answer

Something in the Greenhouse data stopped me. Candidates reported strikingly similar rates of perceived bias from both AI and human evaluators. For age: 36% of US candidates felt evaluated differently by AI because of age, and 36% said the same about human interviewers. For race/ethnicity: 27% for AI, 27% for humans. Gender: 24% for AI, 26% for humans.

The one dimension where AI scored better, at least in candidate perception, was appearance. 21% felt appearance affected their AI evaluation; 28% said the same about human interviewers. That’s the one category where the camera didn’t seem to hurt.

I’ve seen this data pattern repeated across multiple studies now. AI in hiring was sold partly on the premise that it would be less biased than humans, more consistent, less susceptible to gut feeling and in-group affinity. The actual evidence, at least from candidate experience, suggests it’s not less biased. It’s differently biased, and possibly just as biased overall. Perceived age discrimination in particular appears remarkably consistent across markets in the Greenhouse data, ranging from 27% in the UK to 36% in the US, which suggests this isn’t a local artifact but something structural.

Whether AI bias is worse or better than human bias is genuinely contested territory. I’ve seen credible arguments in both directions. What I’m not willing to say is that AI interviews have solved the discrimination problem, because the numbers don’t support it.

There’s a separate conversation to be had about what, if anything, a candidate can do about this, and I’m going to skip it, because I don’t have a useful answer that doesn’t collapse into “hope for a better regulatory environment,” which is not prep advice.

After the silence

38% of US candidates who completed an AI interview were ghosted entirely. Another 13% were still waiting to hear back. That means more than half of people who showed up and completed the process got nothing.

When this happens, there’s a reflex to assume you failed the AI screen, that some algorithm looked at your speech pattern or your word choice and quietly declined you. Maybe. But you genuinely don’t know. The ghosting rate after AI interviews is so high, and so consistent across markets (39% in Australia, 42% in the UK), that it’s not primarily a signal about candidate quality. It’s a signal about employer process quality.

A few things are worth doing after the silence, not because they’re likely to work, but because they clarify your situation.

One follow-up email, sent to the recruiter or hiring manager if you have their information, is reasonable. Send it about a week after your submission. Keep it to two sentences: you completed the interview on [date] and wanted to check on timing. If you don’t hear back from that either, you have your answer, and the answer is “this company doesn’t prioritize candidate communication,” which is useful information about the company.

What you should not do: spend significant time trying to reverse-engineer what the AI scored you on, or seek “feedback” on your AI interview performance. Companies that use these systems almost never provide individual feedback, partly because the scoring models are often proprietary and partly because feedback creates legal exposure. You’re unlikely to get anything useful, and you’ll spend energy that belongs on your next application.

The harder part: recalibrating your job search after repeated silence isn’t just a process problem. It’s psychologically corrosive. I’ve watched people conclude from these experiences that they’re unemployable, and the data doesn’t support that conclusion. They completed a screen and received no response, which tells you nothing about their qualifications.

Referrals bypass this funnel. If you have a connection inside a company you’re serious about, that connection is worth more than a clean AI interview, because it gets a human looking at your file before the algorithm does.

Whether to walk away

More than a third of US candidates (38%) have already withdrawn from a hiring process at least once specifically because it included an AI interview. Another 12% say they would if required, meaning about half of US candidates are either walking away or prepared to.

I’m of two minds about this.

The reasons people withdraw make sense to me. The biggest one in the Greenhouse data: pre-recorded video interviews scored by AI with no human present, cited by 33% of US candidates who abandoned a process. That’s a real objection. You’re being evaluated by a system you don’t understand, with no opportunity to explain context or ask questions, with almost no accountability if it goes wrong. Walking away is a rational response to an opaque process.

But, and this is the part where my advice complicates itself, I’m not sure withdrawal is always serving the candidate’s actual interest. The jobs that use AI screens are disproportionately at companies large enough to have implemented vendor software, which means they’re often the jobs with better compensation, more defined career paths, more stability. If you categorically avoid any process with AI screening, you’re cutting off a significant portion of the job market. Whether that’s worth it depends on your financial runway, your risk tolerance, and how many alternatives you’re actively pursuing.

My rough framework: if a company uses AI as one step in a process that includes human review, and you’ve confirmed that a human will see your submission, that’s different from a fully automated process where you have no certainty that any person ever looks at your file. The former is annoying but navigable. The latter is harder to justify completing, especially if you’re also giving up personal data and video footage to a system whose scoring criteria you can’t verify.

Across all five countries in the Greenhouse survey, the option to request a human interview instead was the top comfort measure candidates named, from 41% in Germany to 49% in Ireland. If you’re going to engage with an AI process at all, asking about that option before you commit to recording is not unreasonable. The worst they can say is no.

One thing I’d push back on: withdrawing as a statement of principle without considering your actual circumstances. I’ve seen candidates in genuinely difficult financial positions decline AI screens out of a sense that the process was beneath their dignity. That’s a legitimate feeling. It’s also sometimes an expensive one.

The decision is yours. But make it with clear eyes about what you’re giving up, not just what you’re refusing.

There’s a question sitting under all of this that I keep circling: does any of this preparation actually move the needle, or are AI interview outcomes mostly determined by factors outside your control: your voice, your accent, how you were trained to speak, whether the system was designed with your demographic in mind? I don’t have a clean answer. The honest one is probably “both, and we can’t always know which.”

I’m also aware that the regulatory picture is shifting fast enough that some of what I’ve written about disclosure requirements may be outdated by the time you read this. The law in this space is genuinely unsettled. State and national laws vary widely. Ask the company what applies to them, and if they don’t know, that’s useful to know too.

How to request a human interview (and when it’s actually worth asking)

There’s one thing the guide above didn’t cover, because it belongs here: what you can do before you ever open the recording tool to change the terms of your evaluation. It’s not a hack. It’s a request. And most candidates either don’t know they can make it or make it in exactly the wrong way.